Multi agent orchestration is the next blind spot

Multi agent orchestration is the next blind spot. One agent is manageable. Ten agents becomes an ecosystem.

One agent is manageable. Ten agents becomes an ecosystem.

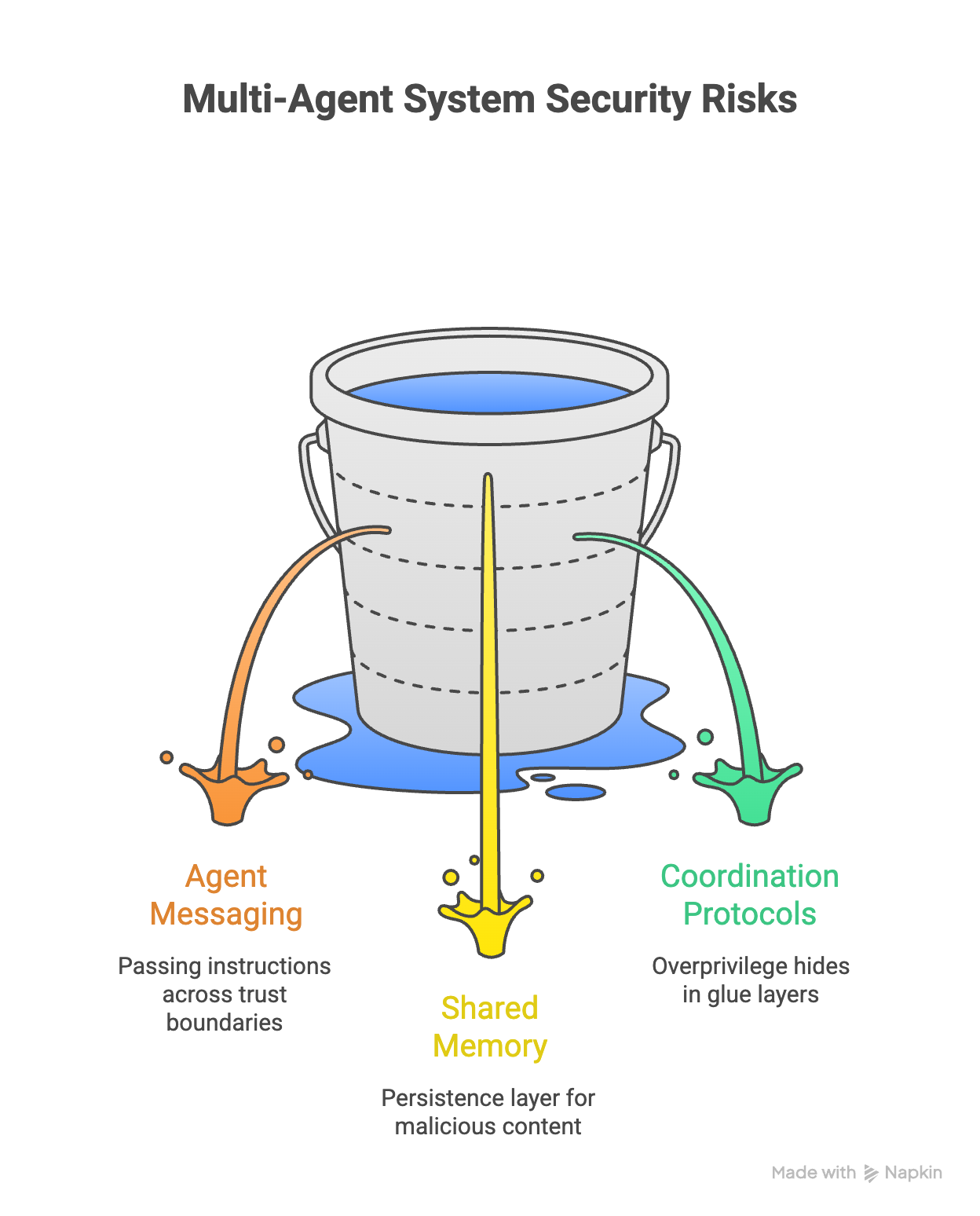

That shift matters because most security thinking still assumes a single model answering a single user. But the reality inside modern enterprises is moving fast toward orchestration: one agent delegates to another, chains tasks across specialized helpers, shares context through memory, and coordinates actions through connectors and tool servers. Cisco calls out this exact expansion of the attack surface: inter agent communication, shared memory, and coordination protocols introduce new paths for failure and abuse.

In a single agent world, the questions are familiar. What data did the user provide? What did the model output? What tools can it call? You can wrap guardrails around that interaction and feel reasonably confident you can audit it.

.png?width=1442&height=1285&name=Multi%20agent%20orchestration%20is%20the%20next%20blind%20spot%20-%20visual%20selection%20(1).png)

In a multi agent world, the risk moves to the seams.

The first seam is agent to agent messaging. When agents hand work to each other, they are effectively passing instructions across trust boundaries. The receiving agent may have different tool access, a different system prompt, or a different safety posture. That creates a classic confusion problem: an attacker does not need to compromise the whole system, they just need to get one agent to pass a poisoned instruction to a more privileged agent. The message can look legitimate because it came from “inside the team,” even if it originated from untrusted content the first agent touched.

The second seam is shared memory. Shared memory is attractive because it makes agents feel consistent and efficient, but it also becomes a persistence layer for bad ideas. If a malicious string, instruction, or fabricated “policy” lands in memory, it can quietly influence future actions long after the original trigger is gone. This is where multi agent systems start to resemble distributed systems security. You are no longer defending a single request. You are defending state.

The third seam is coordination protocols and tool ecosystems. Orchestration often relies on glue layers that describe tools, route calls, and translate context. Those layers are where overprivilege hides. One agent might be harmless on its own, but once it can call a tool that can browse, read documents, modify tickets, or execute workflows, the combined system can produce real world impact at machine speed. Cisco’s point is that new coordination patterns create new attack paths.

This is why “agent security” cannot be reduced to “model safety.” Even a well behaved model can be a problem if it is operating inside a graph of other agents, memory stores, and high power tools. The unit of security stops being a chatbot turn and becomes the full chain: agent to agent, agent to tool, tool to data, and then back into memory where it can persist.

So what does a practical control strategy look like when the system is an ecosystem?

It starts with visibility that is end to end. You need to see the chain of custody for instructions and data: where a task originated, which agent transformed it, which agent escalated it, what tools were invoked, and what data was accessed along the way. Without that chain view, multi agent incidents will look like ghost stories. A downstream agent will take an action, and nobody will be able to explain which upstream message influenced it.

Next, control has to be graph aware. It is not enough to say “Agent A is allowed to call Tool X.” You need conditional rules that account for provenance and context. If the instruction came from untrusted content, or from an external fetch, or from a memory entry that is not validated, you should treat it differently than a user initiated instruction. In other words, you control not just who is calling a tool, but why, based on where the request came from.

Finally, audit needs to be built for ecosystems. When something goes wrong, security teams need a timeline that reconstructs the full path. Not just prompts and outputs, but the internal handoffs, the memory reads and writes, the tool arguments, and the resulting data access. That is the difference between “we have logs” and “we have evidence.”

This is where KonaSense fits. Multi agent orchestration is a blind spot if your controls only watch the chat window. KonaSense is designed to see and govern the whole chain, across the workflows where AI actually runs. The goal is simple: make agent ecosystems observable, controllable, and explainable, so you can move fast with agents without creating a new class of invisible risk.

The next wave of AI incidents will not come from a single bad prompt. It will come from a system of agents doing exactly what they were designed to do, just with one poisoned handoff in the middle.