Treasury just raised the bar on AI risk. Here is the practical playbook.

Treasury's new AI guidelines aim to streamline adoption in financial services by standardizing risk management and governance practices. Learn practical steps to align with the updated framework.

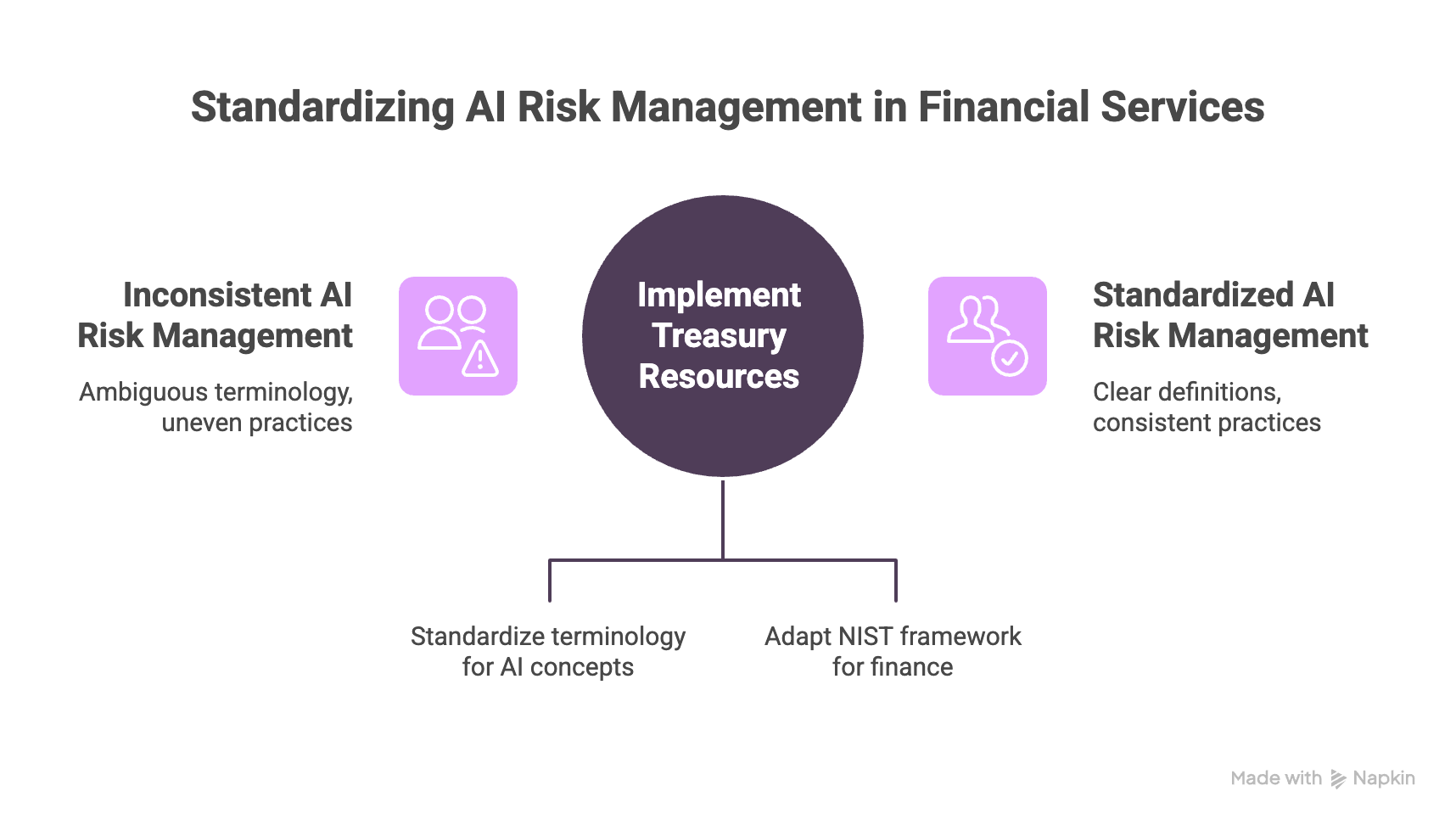

On February 19, 2026, the U.S. Department of the Treasury released two resources aimed directly at financial institutions: a shared AI Lexicon and the Financial Services AI Risk Management Framework, or FS AI RMF. Treasury’s framing is the part that matters. This is not about slowing AI down. It is about removing ambiguity so banks, fintechs, and their vendors can adopt AI faster without losing control of risk, accountability, and operational resilience.

If you read the press release like an auditor, the underlying demand is simple: prove you can govern AI the same way you govern any other system that can impact consumers, money movement, fraud outcomes, or regulatory exposure. Treasury calls out a problem many institutions are already living with: inconsistent terminology and uneven risk management practices make it hard to supervise AI usage consistently across technical, legal, business, and compliance functions. The Lexicon is meant to fix that, because you cannot manage risk if every team is using different definitions for the same concepts.

The FS AI RMF takes the next step. It adapts the NIST AI Risk Management Framework to the realities of financial services, and it is packaged as practical material, not theory. It is described as scalable and flexible for institutions of varying size and complexity, and it was developed through the public-private AIEOG effort with FSSCC and the Cyber Risk Institute. That combination is a big signal: the sector is standardizing what “good” looks like before regulators standardize it for you.

So what does this mean for a security or risk leader on Monday morning?

It means the conversation shifts from “Do we allow AI?” to “Where is AI used, what data touches it, what controls fire at the moment of use, and what evidence do we retain?” Frameworks like NIST AI RMF are explicit that AI risk management is socio-technical. People, process, and technology all contribute to risk, which is why training alone will never be sufficient.

Here is a practical playbook that aligns with the spirit of Treasury’s release without turning into a multi-quarter transformation program.

Start with an AI inventory that reflects reality, not procurement. If your inventory only lists tools your company officially bought, it will miss the largest risk surface: shadow AI usage, browser-based copilots, personal accounts, and agent workflows built by teams that move faster than governance. In financial services, the inventory needs to include use cases, owners, data classes involved, the model or vendor, and where the output is used downstream. This is how you separate low-risk productivity usage from high-risk decision support, fraud triage, customer communications, or anything that could produce adverse outcomes.
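
As a rough sketch of what one inventory record could look like, here is a minimal Python example. The field names, the `HIGH_RISK_USES` labels, and the two-tier rule are illustrative assumptions, not something defined by the FS AI RMF.

```python
from dataclasses import dataclass

# Hypothetical labels for downstream uses that this sketch treats as high risk.
HIGH_RISK_USES = {"decision_support", "fraud_triage", "customer_communications"}


@dataclass
class AIInventoryEntry:
    use_case: str
    owner: str
    data_classes: list[str]
    model_or_vendor: str
    downstream_use: str
    sanctioned: bool = True  # False for shadow usage found outside procurement


def risk_tier(entry: AIInventoryEntry) -> str:
    """Return 'high' when the output feeds a high-risk downstream use, else 'low'."""
    return "high" if entry.downstream_use in HIGH_RISK_USES else "low"


inventory = [
    AIInventoryEntry(
        use_case="Draft internal meeting notes",
        owner="ops-team",
        data_classes=["internal"],
        model_or_vendor="browser copilot (personal account)",
        downstream_use="productivity",
        sanctioned=False,
    ),
    AIInventoryEntry(
        use_case="Rank fraud alerts",
        owner="fraud-ops",
        data_classes=["transaction", "pii"],
        model_or_vendor="example vendor model",
        downstream_use="fraud_triage",
    ),
]

# Map each use case to its tier so reviewers can sort by exposure.
tiers = {entry.use_case: risk_tier(entry) for entry in inventory}
```

The `sanctioned` flag is kept separate from the tier on purpose: an unsanctioned tool doing low-risk work and a sanctioned tool doing fraud triage are different problems, and the inventory should show both.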

Next, treat policy as a runtime control, not a PDF. Most governance programs fail because they describe what should happen, but they do not change what actually happens. Employees will paste sensitive snippets, upload documents, or route data into whatever AI tool helps them finish the job. A modern control needs to respond in the moment with outcomes like allow, block, redact, or coach. Coaching matters more than people think because it turns enforcement into behavior shaping rather than punishment, which is how you keep AI usage visible instead of driving it underground.
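
To make the four outcomes concrete, here is a minimal Python sketch of a decision function evaluated at the moment of use. Everything in it is an assumption for illustration: the `evaluate` function, the rule order, and the idea that a run of nine or more digits stands in for an account number. A real control would use proper data classification rather than a single regular expression.

```python
import re
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REDACT = "redact"
    COACH = "coach"


# Assumption: a run of 9 or more digits is treated as an account number.
ACCOUNT_PATTERN = re.compile(r"\b\d{9,}\b")


def evaluate(prompt: str, tool_sanctioned: bool) -> tuple[Decision, str]:
    """Return the decision and the text that may be forwarded to the AI tool."""
    has_account = bool(ACCOUNT_PATTERN.search(prompt))
    if has_account and not tool_sanctioned:
        # Sensitive data headed to an unsanctioned tool: nothing is forwarded.
        return Decision.BLOCK, ""
    if has_account:
        # Sanctioned tool: forward the prompt with the sensitive span masked.
        return Decision.REDACT, ACCOUNT_PATTERN.sub("[REDACTED]", prompt)
    if not tool_sanctioned:
        # No sensitive data, but nudge the user toward an approved tool.
        return Decision.COACH, prompt
    return Decision.ALLOW, prompt


decision, forwarded = evaluate("Summarize activity for account 123456789012", True)
```

In this sketch `COACH` still forwards the prompt. The user gets a message instead of a wall, which is what keeps the usage visible.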

Then, make audit trails readable and defensible. “We log things” is not evidence. Evidence is the ability to reconstruct an event: who used which AI system, when, what category of data was involved, what control decision occurred, whether anything was redacted or blocked, and what exceptions were approved. This is the difference between a governance story and a governance system. It is also the bridge between security and compliance. Treasury’s release is fundamentally about making adoption safer by increasing clarity and consistency, and that only works when oversight produces records that multiple stakeholders can trust.
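
One way to picture an event that can be reconstructed is a structured record with one field per question above. The Python sketch below is illustrative only: the `AuditEvent` schema, the field names, and the JSON-lines format are assumptions, not a format prescribed by Treasury or NIST.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    user: str                # who
    ai_system: str           # which AI system
    timestamp: str           # when (ISO 8601, UTC)
    data_category: str       # what category of data was involved
    decision: str            # allow | block | redact | coach
    redacted: bool           # whether anything was masked
    exception_id: Optional[str] = None  # approved exception, if any


def to_log_line(event: AuditEvent) -> str:
    """Serialize one event as a single JSON line with stable key order."""
    return json.dumps(asdict(event), sort_keys=True)


def events_for_user(lines: list[str], user: str) -> list[dict]:
    """Reconstruct the recorded events for one user from stored log lines."""
    return [e for e in map(json.loads, lines) if e["user"] == user]


event = AuditEvent(
    user="analyst-17",
    ai_system="example-copilot",
    timestamp=datetime(2026, 3, 2, 14, 30, tzinfo=timezone.utc).isoformat(),
    data_category="pii",
    decision="redact",
    redacted=True,
)
log = [to_log_line(event)]
history = events_for_user(log, "analyst-17")
```

Making the record immutable (`frozen=True`) and serializing with a stable key order are small choices that make records easier to compare, and to trust, across security and compliance teams.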

Finally, update incident response for AI-specific failure modes. Financial services is already strong at fraud response and cyber response. AI blends those worlds. You should assume scenarios like prompt injection, data leakage through copy-paste, model output that triggers a bad operational decision, or an agent workflow that took an action outside intended boundaries. The goal is not to create fear. It is to ensure you can detect, contain, and explain what happened. Treasury explicitly links these tools to AI cybersecurity and operational resilience, and this is where those words become operational.
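
For the last scenario, detection can start with something as simple as comparing each agent action against declared boundaries. The Python sketch below assumes a hypothetical allowlist (`ALLOWED_ACTIONS`) and amount ceiling (`MAX_AMOUNT`). Real boundaries would come from your own policy, not from this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    agent: str
    action: str
    amount: float = 0.0


# Hypothetical boundaries: permitted actions and a per-action amount ceiling.
ALLOWED_ACTIONS = {"read_balance", "draft_email", "issue_refund"}
MAX_AMOUNT = 100.0


def out_of_bounds(a: AgentAction) -> list[str]:
    """Return the reasons an action violates its boundaries (empty if none)."""
    reasons = []
    if a.action not in ALLOWED_ACTIONS:
        reasons.append(f"action '{a.action}' is not permitted")
    if a.amount > MAX_AMOUNT:
        reasons.append(f"amount {a.amount} exceeds limit {MAX_AMOUNT}")
    return reasons


violations = out_of_bounds(AgentAction("refund-bot", "issue_refund", 250.0))
```

Returning reasons rather than a bare boolean covers the "explain" part of detect, contain, and explain: the same strings can go straight into the incident record.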

This is exactly where KonaSense sits. Treasury’s release validates a direction the market is converging on: governance, security, and observability have to operate as one control layer across real workflows. KonaSense is built to give security teams visibility into actual AI usage, control that executes at the point of interaction, and telemetry that becomes evidence when auditors, regulators, or incident responders need answers.

Treasury did not publish a ban. It published a baseline. If you are in financial services, the winning strategy is not to slow down AI. It is to adopt AI faster with controls that you can prove.